Ice breakers in train-the-trainers events are a bit like the safety briefing on a flight: some people roll their eyes because they “already know it”, a few tune out immediately, but when it’s done well it quietly makes everything that follows smoother, safer, and more effective.

The love side is obvious. A well-chosen ice breaker reduces social friction, makes participation safer, and creates early “small wins” for people who arrive tired, sceptical, or unsure about their own competence. The hate side is also real. Ice breakers can feel forced, childish, culturally awkward, or simply like time theft—especially when participants are experienced professionals who have seen the same activities recycled for a decade.


In AI4VET we decided to treat this tension as design information: if we want educators to take GenAI seriously, our opening moments have to be purposeful, light, and visibly connected to the learning outcomes. That is where “GenAI-infused ice breakers” turned out to be surprisingly effective: they soften the room without pretending we are all best friends, and they demonstrate capability without turning the first hour into a tech lecture.

About AI4VET and the training we ran

AI4VET (“Transforming Vocational Education Through AI and Future Classroom Lab”) is an Erasmus+ KA220-VET Cooperation Partnership (partners from Poland, Austria, Croatia, Romania, and Lithuania). The project focuses on building a practical, ethical, and inclusive pathway for integrating Generative AI into VET through a structured framework, guidance, and future classroom lab approaches.

As part of the project, we designed a five-day transnational capacity-building training programme (“Generative AI and Transformative Educational Content”), structured as a micro-credential (50 hours / 2 ECTS) and organised into five modules: (1) Introduction to AI, (2) AI for content creation & lesson planning, (3) AI for student engagement & assessment, (4) AI for inclusion & accessibility, and (5) practical strategies & a personal action plan. The programme is deliberately hands-on and non-formal, with peer collaboration embedded throughout. The training for 15 educators took place at our institution, Public Open University Čakovec, in December 2025.

In that context, ice breakers were not “extra”. They were the first demonstration of the training’s core message: AI is not a topic to be explained; it is a tool to be used (responsibly) to create better learning experiences. If we opened with passive listening, we would be undermining our own premise.

Our GenAI-infused ice breakers

Below are the activities we used (or variants of them), written so you can lift them into your own VET/adult learning training. All can be done with Gemini, but they also work with ChatGPT, Copilot, Mistral, DeepSeek, etc. The key is not the brand – it’s the facilitation, the constraints you set, and the clarity about what “good participation” looks like.

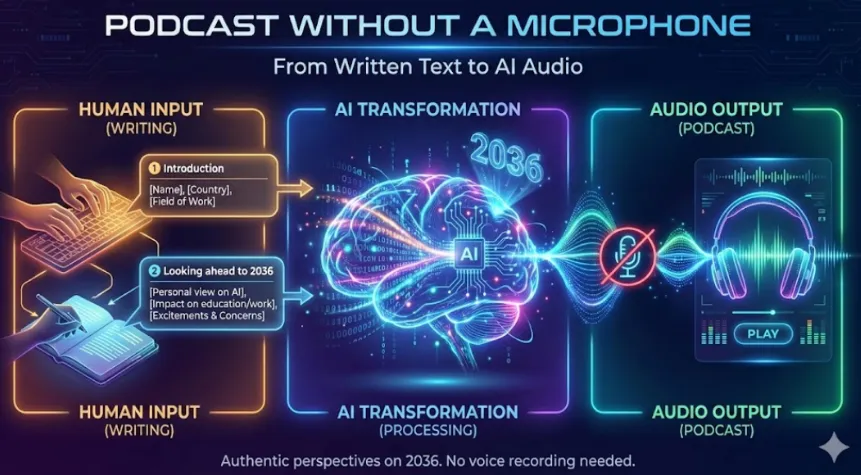

1. Podcast without a microphone

The concept is simple. Instead of asking participants to introduce themselves verbally, they write a short personal statement. These written contributions are then combined with a brief contextual introduction and transformed into an AI-generated audio preview using NotebookLM.

No one records their voice. No one performs in front of the group. Yet the final result is a shared audio artefact that captures the collective voice of the training cohort. The method reframes the traditional icebreaker. Rather than focusing on spontaneous speaking, it emphasises written reflection, authorship, and future-oriented thinking (and it does so without turning the room into a confessional).

The activity was structured in two short parts:

Part 1 – Personal Introduction

Participants wrote 2–3 sentences including their name, country, and professional context. This established identity and diversity within the group.

Part 2 – Looking Ahead to 2036

Participants wrote 4–5 sentences reflecting on where they believe artificial intelligence will be in ten years and how it might influence education or their professional practice.

All statements were written in the first person. The facilitator collected the texts and uploaded them into NotebookLM, together with a short introduction describing the project and the training context. The tool then generated an audio preview based strictly on those sources. The result was a concise podcast episode combining individual voices into a coherent narrative.
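If you collect the statements digitally, the step of preparing one source text for upload can be sketched in a few lines of Python. This is a minimal illustration, not the workflow we actually used: the `Statement` class, its field names, and the section labels are all assumptions made for the example, and the resulting text is still uploaded to NotebookLM by hand.

```python
from dataclasses import dataclass


@dataclass
class Statement:
    """One participant's written contribution (field names are illustrative)."""
    introduction: str  # Part 1: name, country, professional context
    outlook_2036: str  # Part 2: where AI might be in ten years


def build_source_document(project_intro: str, statements: list[Statement]) -> str:
    """Combine the facilitator's introduction and all statements into one text."""
    sections = ["PROJECT AND TRAINING CONTEXT", project_intro.strip(), ""]
    for number, statement in enumerate(statements, start=1):
        # Numbered labels (an assumption) keep the individual voices separable.
        sections.append(f"PARTICIPANT {number}")
        sections.append(statement.introduction.strip())
        sections.append(statement.outlook_2036.strip())
        sections.append("")
    return "\n".join(sections).strip() + "\n"
```

Calling `build_source_document(intro_text, statements)` returns a single string that can be saved as a text file and added as a source. Keeping each participant under a separate label is a design choice meant to help the generated audio stay close to what people actually wrote.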

This method generated multiple pedagogical benefits within a single, well-structured activity. By replacing live speaking with written reflection, it lowered participation barriers and created psychological safety for all participants, while also reducing the anxiety associated with speaking English in front of an unfamiliar group – particularly as none of the participants were native speakers. The future-oriented prompt encouraged structured, forward-looking thinking about AI beyond immediate technical use, supporting deeper professional reflection. Transforming individual texts into a shared AI-generated audio narrative fostered a sense of collective identity and shared authorship. At the same time, participants experienced AI as a practical, transparent tool for content transformation rather than an abstract concept, strengthening experiential AI literacy. Finally, the resulting podcast served both as a reflective record of the learning event and as a dissemination asset for wider audiences.

2. Lost, found & translated (AI edition)

During the second day of the AI4VET transnational learning event, participants engaged in an interactive icebreaker titled “Lost, Found & Translated (AI Edition)”. Each participant randomly selected an A4 sheet containing five questions written in languages not spoken by the group (Portuguese, Dutch, Korean, Georgian, Nepali and Swahili), along with clear instructions in English. The task required participants to translate the questions into their mother tongue using their smartphones, write the translations on paper, and then find people outside their national group who could answer the questions.

Crucially, questions were asked using Google Translate voice mode, allowing participants to speak in their own language while the tool played the question aloud in the interlocutor’s language. The “answers” were not written as statements; instead, participants recorded only the name of the person who answered, reinforcing the human-centred nature of the activity (the point was contact, not content capture).

Pedagogically, the icebreaker combined several intentional elements. It embedded experiential learning by making AI use part of the task rather than an explanation. It relied on social learning through short, repeated peer interactions rather than one big “share-out”. It supported embodied learning by requiring movement around the room, which matters more than we often admit in adult training. And it used inclusive design by ensuring everyone – regardless of language background – started from an equal position of not understanding the written questions without AI support.

The activity demonstrated, in a very concrete way, how AI can lower linguistic barriers, increase confidence and enable communication, while also highlighting its limitations (mishearings, odd phrasing, the occasional comic mistranslation). More importantly, it modelled a transferable teaching approach: using simple tools, clear structure and purposeful interaction to support learning in multilingual and diverse adult education settings. “Lost, Found & Translated” showed that meaningful AI integration does not require complex systems or advanced technical knowledge; it requires good task design and clear facilitation choices.

3. Inclusive Christmas Dish

This activity functions as a playful entry point into serious themes such as inclusive design, Universal Design for Learning (UDL), and later, AI-supported accessibility. The idea is simple. Participants are divided into small groups and asked to design and draw a “Christmas dish” that everyone at the table can enjoy. However, there is one crucial rule: no words are allowed. Communication must happen exclusively through icons and drawings. Each group also receives several constraints, such as nut allergy, gluten-free requirement, limited fine motor skills, low vision, language barriers, or cultural differences. The dish must accommodate all assigned conditions from the outset.

The task unfolds in three stages. First, groups sketch the dish and show how it is served. Second, they integrate their specific constraints into the design. Third, they ensure the presence of four mandatory inclusive features: visual ingredient transparency, adaptable portions, low-barrier eating (e.g. easy handling), and a calm or low-sensory option. After a short design phase, groups present their solution in one minute, explaining how their design removes barriers.

What appears at first as a festive drawing exercise quickly becomes a miniature design sprint. Participants realise that inclusion is not about adding special solutions later, but about structuring the experience differently from the start. The “no words” rule highlights the importance of multimodal communication. The constraint cards shift attention from the “average user” to real, diverse individuals. The mandatory features require anticipatory thinking rather than reactive adjustments.

Educationally, the activity achieves several outcomes. It develops empathy by forcing participants to design for people with different needs. It strengthens systems thinking, as groups must coordinate health, communication, cultural and physical aspects simultaneously. It models UDL principles in practice, particularly multiple means of representation and action. It also prepares participants conceptually for AI-supported inclusion, since the transition to discussing adaptive texts, translation tools, or text-to-speech features becomes natural and grounded in lived experience rather than in abstract compliance language.

Most importantly, the activity demonstrates that inclusive design is not a technical add-on but a mindset. By starting with markers and paper instead of software, participants first internalise the logic of inclusion. Only afterwards does the conversation move toward how AI can scale and support these same principles responsibly within vocational education and training contexts.

In this sense, the “Inclusive Christmas Dish” is more than an icebreaker. It is a condensed rehearsal of inclusive pedagogy in action.

4. International Christmas Carol

The “International Christmas Carol” is a collaborative icebreaker designed for international groups, combining creativity, multilingualism, and responsible use of artificial intelligence. While it appears playful on the surface, the activity is structured to demonstrate how AI can meaningfully support inclusive and collaborative learning – without replacing the human work of negotiating meaning.

The idea is simple: participants work in groups of five, with each member representing one project language (Croatian, German, Lithuanian, Polish, and Romanian). Their task is to co-create a short Christmas carol that includes all five languages. Each participant becomes the “guardian” of their language, ensuring authenticity and equal representation.

The process unfolds in two stages. First, participants use ChatGPT or Gemini to generate simple, singable lyrics. The prompt clearly requires that each verse contain one line in each language, with accessible vocabulary and a joyful tone. AI supports the drafting process, but final decisions remain human. Participants discuss, adapt, and approve the text together, correcting awkward literal translations and making sure each language is present as more than decorative “token” lines.
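For facilitators who want to hand every group the same starting point, the drafting prompt can be assembled from the requirements described above. The sketch below is one possible wording, not the exact prompt we used: the function name, the default of two verses, and the request to label each line with its language are assumptions added for illustration.

```python
PROJECT_LANGUAGES = ("Croatian", "German", "Lithuanian", "Polish", "Romanian")


def build_carol_prompt(languages=PROJECT_LANGUAGES, verses=2):
    """Return a drafting prompt for a multilingual carol (wording is illustrative)."""
    if not languages:
        raise ValueError("At least one language is required.")
    language_list = ", ".join(languages)
    return "\n".join([
        f"Write a short Christmas carol with {verses} verses.",
        f"Each verse must contain exactly one line in each of these languages: {language_list}.",
        "Use simple, accessible vocabulary that is easy to sing.",
        "Keep the tone joyful.",
        # Assumption: labelled lines make review by each language guardian easier.
        "Label every line with its language so native speakers can review it.",
    ])


print(build_carol_prompt())
```

The printed text can be pasted into ChatGPT or Gemini as it is. Whatever comes back is only a draft: the language guardians in each group still review, correct, and approve every line.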

In the second stage, the finalised lyrics are entered into Suno AI, which generates a musical version of the carol. By adding a style prompt such as “warm European Christmas carol with bells and light choir,” groups quickly receive a complete song. The result is not expected to be perfect. In fact, slight linguistic imperfections and varied accents often enhance the experience, reinforcing that the goal is collaboration rather than polish. In our case, even the “rough edges” helped: they gave us something concrete to talk about when we later discussed AI output quality and the difference between novelty and usefulness.

Educationally, the outcomes are significant. The activity lowers language anxiety, especially in multilingual settings. It models ethical AI use by keeping human agency central. It demonstrates Universal Design for Learning principles through multiple forms of expression – text, music, and performance. Most importantly, it turns diversity into a shared resource rather than a barrier.

As a capstone-style exercise, the International Christmas Carol shows that AI in education is not about automation or spectacle. It is about intentional design. When pedagogy leads and AI supports, even a simple holiday song can become a meaningful example of inclusive, creative, and responsible digital practice.

Conclusion and invitation

If you have tried any of these activities (or built your own variation), I would genuinely like to hear how it worked in your context. What landed well with your learners, what felt awkward, and what did you change to make it fit your group culture, time constraints, or subject area?

Feel free to share a short description of your implementation, the prompts or tools you used (Gemini, NotebookLM, Translate voice mode, Suno, or alternatives), and any “watch-outs” you discovered along the way. The aim here is not to collect perfect success stories, but to build a practical pool of real-world adaptations that other VET and adult educators can lift, remix, and improve.

How to cite: Hajdarovic, M. (2026). GenAI ice breakers in VET training. EPALE. URL: https://epale.ec.europa.eu/en/blog/genai-ice-breakers-vet-training